Introduction

DeepSeek V4, long awaited, has finally arrived. Just hours ago, the preview version was released and open-sourced. Coincidentally, OpenAI also launched GPT-5.5 on the same day. One continues with a closed-source productivity system, while the other focuses on open-source, long context, and low-cost inference. The two largest foundational model companies in the US and China crossed paths on the same day.

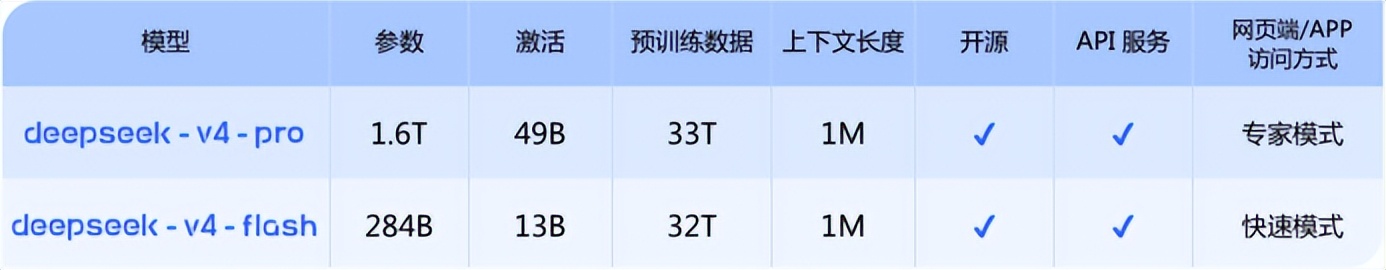

DeepSeek V4 comes in two versions: Pro and Flash, both supporting an ultra-long context of one million tokens, with total parameter scales of 1.6 trillion (activating 49 billion) and 284 billion (activating 13 billion) respectively.

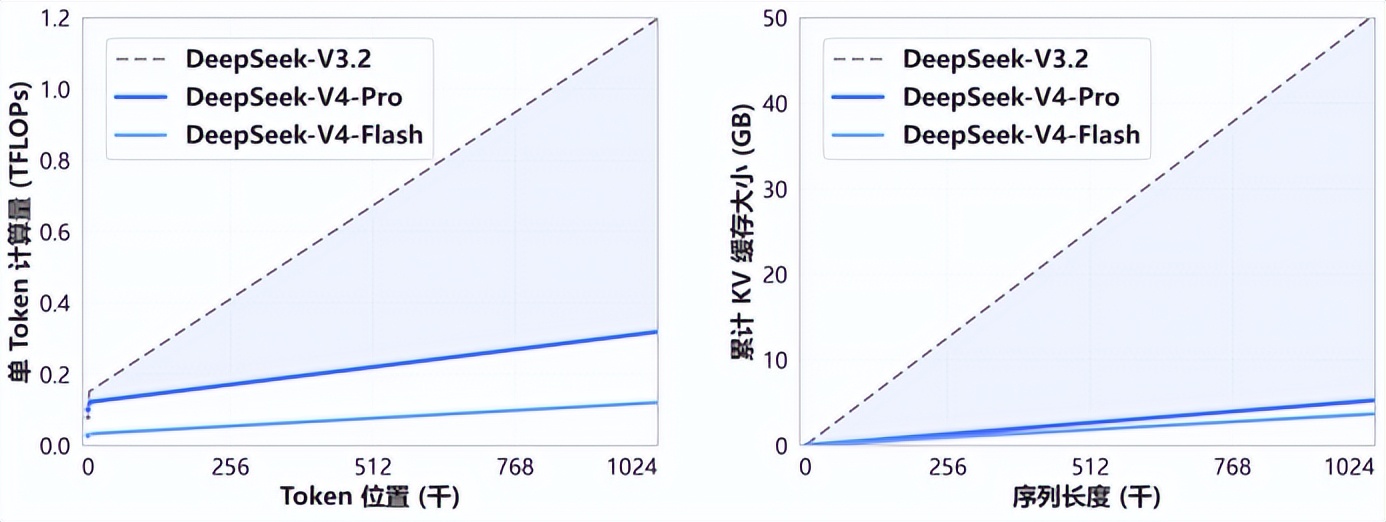

However, compared to the exaggerated figures of “1.6 trillion parameters” or “one million token context,” two numbers in the technical documentation are more noteworthy: 27% and 10%. According to the introduction of the V4 series on HuggingFace, in a one million token context scenario, the single token inference FLOPs of V4-Pro is only 27% of that of V3.2, and the KVcache is only 10% of V3.2.

In simpler terms, in the context of processing ultra-long materials, V4 not only “can hold it,” but also runs faster and is cheaper. This may be the most noteworthy aspect of the V4 update.

Efficiency Over New Features

In the past six months, long context has become a common selling point among leading models. Claude, Qwen, Kimi, and GLM are all moving towards long texts, code repositories, and agent tasks, while DeepSeek has focused on the most expensive part of long text scenarios: computation and caching.

Regrettably, V4 currently lacks native multimodal capabilities, which limits its performance in certain scenarios. Thus, the keyword for V4 is not the long-awaited “new species” in the industry, but rather a further step in “efficiency engineering.”

Reflecting on the past, DeepSeek has never followed the route of “sexy” products. In the ocean of soaring token calls, what V4 needs to uphold is the ambition of this super unicorn with a valuation of $20 billion.

Speed and Limitations

In 2026, it is no longer surprising that large models support long contexts. However, another question arises: can the model continue to work efficiently when processing ultra-long texts and chains? If a model only looks at a few paragraphs, answering questions is not difficult. But if it has to review an entire code repository, dozens of contracts, or months of meeting records, the difficulty increases exponentially.

The single token inference FLOPs of V4-Pro is only 27% of that of V3.2, and the KVcache is only 10% of V3.2, which directly addresses this issue. The former indicates the computational load required to generate each token, while the latter indicates KVcache usage, which can be understood as the model’s “working memory” needed when processing long texts.

The longer the text, the heavier this working memory becomes. If the model has to carry a complete burden at every step, it becomes difficult to remain agile. Thus, speed is key. Here, speed does not refer to answering a few seconds earlier in a chat window, but rather the operational efficiency in long text tasks. After consuming 1 million tokens, can the model still run efficiently and support high-frequency calls?

This point is also reflected in the newly launched GPT-5.5, where many ChatGPT users have noted that the response speed of GPT-5.5-Thinking has improved significantly.

Given the current popularity of agent workflows, this efficiency improvement is even more critical. System-level agent tools, including OpenClaw, often need to read files, check information, call tools, modify code, save intermediate states, and continue to the next step based on feedback.

The more realistic the task and the longer the context, the more the computational and caching burden snowballs. Many agent products today seem futuristic, but when it comes to cost, they can feel disastrous. If V4 can indeed reduce operational efficiency under long contexts, it will impact the entire cost structure of the agent toolchain.

Practical Tests with DeepSeek V4

We at Letter AI had a simple hands-on experience with DeepSeek V4 Pro, setting up a basic offline environment and running two tests that closely resemble everyday user scenarios.

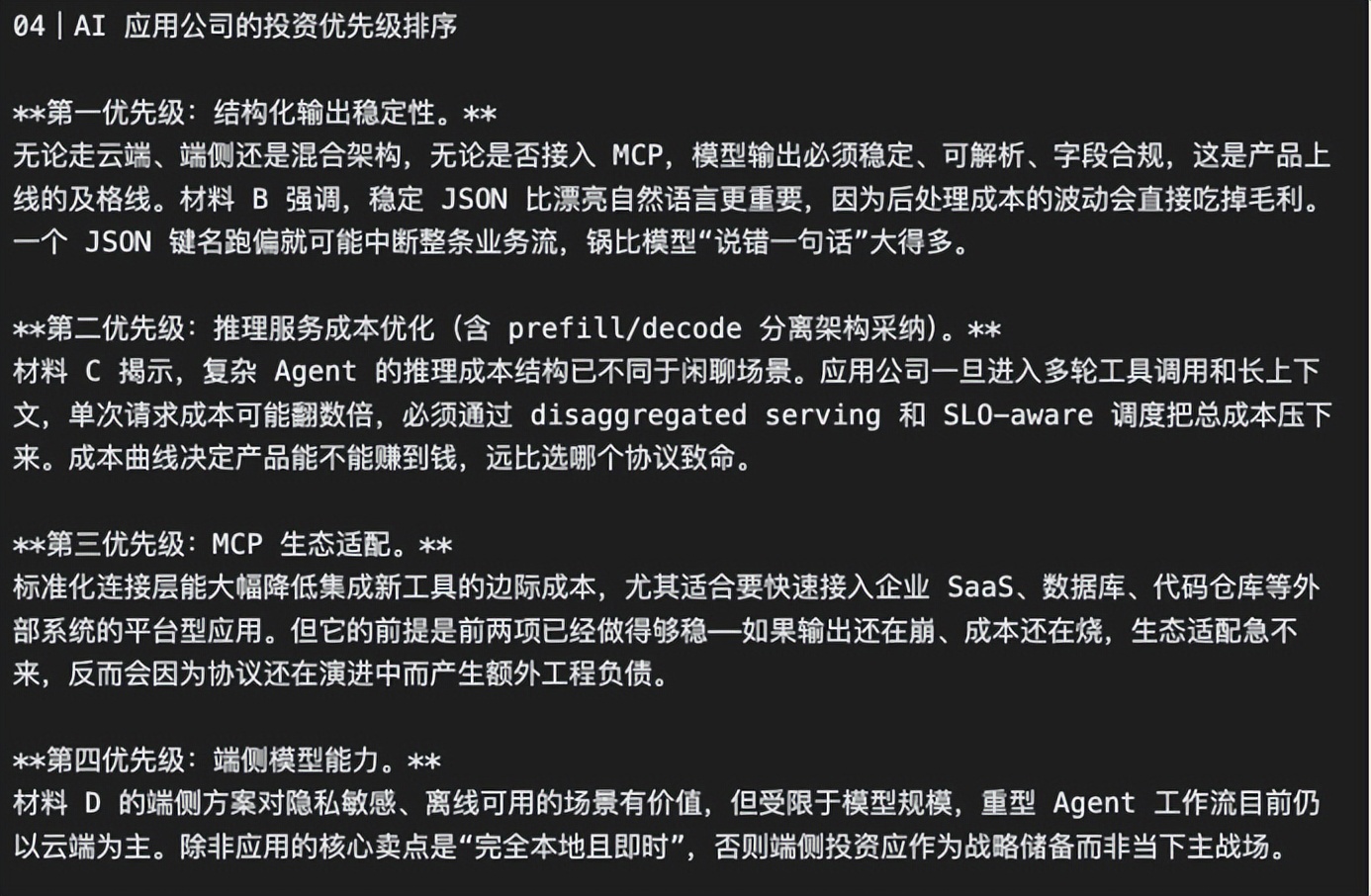

First, we provided V4 Pro with a set of materials about MCP, structured output, tool invocation, edge-side models, and inference services, asking it to write a technical analysis. This task primarily tests whether the model can organize a bunch of concepts and terms into a clear engineering diagram.

V4 Pro performed like a seasoned technical editor. It did not reiterate the materials point by point but grasped a main line: the competition among agents is not just about model parameters, but how the model can stably connect to external systems. In other words, the model should not only be able to “think” but also read files, query databases, invoke tools, and write results back to business systems.

It understood structured output as “making the model speak in a way that machines can directly understand” and MCP as “making it easier for the model to access external tools through standard interfaces,” which is closer to real products than merely explaining terms.

The second test involved asking it to write a local command-line tool in Python to manage daily collected AI industry news tips. The prompt was simple, with just a few basic constraints: no internet connection, no API calls; it should be able to add, view, filter, deduplicate, automatically score news value, and export a markdown daily report.

V4 Pro directly provided a runnable small tool. Users can input company, title, type, source, link, time, text, and verification status. The program automatically calculates the news value score and categorizes the tips into “directly quotable,” “needs further verification,” and “not adopted for now.” The exported markdown is also grouped by hierarchy, retaining dimensions like company, title, type, score, and source.

This test illustrates that V4 Pro can break down a relatively complex intention into structure, rules, and executable code, aligning with DeepSeek’s past user mindset.

On developer channels like OpenRouter, the DeepSeek V3 series has already proven its cost-effectiveness and user inertia. Data from OpenRouter shows that the DeepSeek V3 series token consumption exceeded 7.27 trillion in 2025, ranking fifth, only behind models like ClaudeSonnet4 and Gemini2.0Flash. Even today, the call volume of DeepSeek V3.2 remains high on the OpenRouter leaderboard.

This indicates that user recognition is not solely based on benchmarks but on whether a model is stable, affordable, and efficient in real workflows.

This can also be observed in Claude’s case. In comparisons between ClaudeOpus4.6 and GPT-5.4 series on various model capability rankings, the conclusion does not always favor Claude, and in some knowledge, reasoning, and speed metrics, GPT-5.4 performs better.

However, this does not prevent Claude from continuing to capture the developer and enterprise market. Anthropic revealed in February of this year that, based on the then-revenue pace, the company’s annual revenue scale had reached $14 billion, with a growth rate exceeding tenfold over the past three years.

Thus, to objectively assess a model’s capabilities, one must consider its actual engineering performance in real workflows.

Limitations of V4

Of course, V4 is not without its shortcomings. The biggest regret is its current lack of “native multimodal” capabilities. Even before its release, the community’s expectations for V4 were not limited to a text model. Some media had previously reported that DeepSeek V4 was planned to be a multimodal model capable of handling images, videos, and text generation.

The absence of multimodal capabilities indeed poses a real problem. Once visual understanding, chart analysis, and processing of PPT/web/software interfaces are involved, it reaches the model’s capability boundaries.

Today’s productivity tasks are no longer just about “reading a piece of text.” Many users are genuinely dealing with images, tables, screenshots, PDFs, web pages, video conferences, and complex software interfaces. Without native multimodal capabilities, V4 can still serve as a powerful foundation for long tasks, but it is not yet a complete entry point for work.

From another perspective, standing at the crossroads of financing and IPO, V4 primarily addresses the foundational issues for its parent company rather than constructing a complete building.

DeepSeek at a Financing Crossroad

Another backdrop to V4’s release is the sudden influx of financing news regarding DeepSeek. Clearly, as a rare species in the Chinese AI industry, DeepSeek has never been short of funds.

In the past, one of DeepSeek’s most recognizable tags was that it did not push forward like typical AI unicorns relying on financing narratives. It has the backing of quantitative fund company Huanshan and a figure like Liang Wenfeng, who has maintained a mysterious yet focused image in the industry for a long time.

However, the situation has begun to change recently. Latest reports indicate that DeepSeek is seeking financing at a valuation of over $20 billion, with companies like Alibaba and Tencent reportedly in talks to invest. The specific numbers are still under negotiation, but the direction is clear: DeepSeek has reached a point of welcoming the capital market.

V4 is an important lever at this juncture. The focus on efficiency in V4 actually captures the most concerning aspects for the current developer community, and predictable demand for calls may be further amplified, driving more commercial applications.

This is also the most challenging hurdle for DeepSeek ahead. The $20 billion valuation must prove not only the strength of the model but also whether it can transform into a stable commercial system.

Competitors are already taking action in this regard. Qwen, GLM, and Kimi are all moving towards agentic coding, tool invocation, and long task execution, while Claude has made enterprise knowledge work and code workflows its most important commercial focus.

Clearly, relying on V4’s capabilities, DeepSeek still needs more product-level implementations. Agents cannot run solely on the foundational model; they also require browsers, file systems, permission systems, enterprise software interfaces, plugin ecosystems, and product experiences. Even if V4 solves the foundational issue, how to establish a user ecosystem for productivity scenarios is a question Liang Wenfeng and his team need to consider next.

Thus, the most accurate positioning of V4 is not as a new species of model as people imagine, but as an elevation of the “open-source model task foundation” to a new height.

In the past, DeepSeek has proven that Chinese companies can create strong models at lower costs. V4 must demonstrate whether this low-cost route can continue to hold firm in the era of one million contexts, agents, domestic computing power, and simultaneous commercialization.

Currently, V4 has played the efficiency card. The next question DeepSeek must answer is whether this card can support the commercial scale of a $20 billion company.

Comments

Discussion is powered by Giscus (GitHub Discussions). Add

repo,repoID,category, andcategoryIDunder[params.comments.giscus]inhugo.tomlusing the values from the Giscus setup tool.